I’ve been building redundant storage solutions for years. At first, I used them for our web cluster storage. Nowadays they are the base of our CloudStack cloud storage. If you ask me, the best way to create a redundant pair of Linux storage servers using Open Source software is to use DRBD. Over the years it has proven to be rock solid for me.

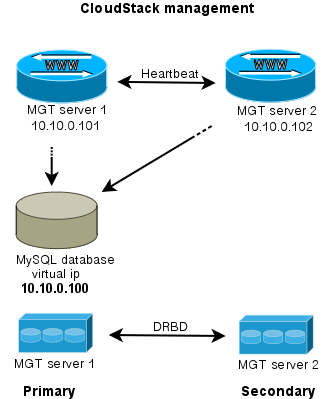

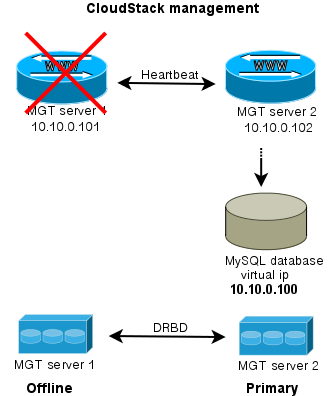

DRBD is a Distributed Replicated Block Device. You can think of DRBD as RAID-1 between two servers. Data is mirrored from the primary to the secondary server. When the primary fails, the secondary takes over and all services remain online. DRBD provides tools for failover but it does not handle the actual failover. Cluster management software like Heartbeat and Pacemaker is made for this.

In this post I’ll show you how to install and configure DRBD, create file systems using LVM2 on top of the DRBD device, serve the file systems using NFS and manage the cluster using Heartbeat.

Installing and configuring DRBD

I mostly use Debian, so I’ll focus on that OS. I have set up DRBD on CentOS as well; there you need the ELRepo repository to find the right packages.

Squeeze-backports has a newer version of DRBD. If you, like me, want to use this version instead of the one in Squeeze itself, use this method to do so:

echo "

deb http://ftp.debian.org/debian-backports squeeze-backports main contrib non-free

" >> /etc/apt/sources.list

echo "Package: drbd8-utils

Pin: release n=squeeze-backports

Pin-Priority: 900

" > /etc/apt/preferences.d/drbd

Then install the DRBD utils:

apt-get update

apt-get install drbd8-utils
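APT preferences treat a blank line as a record separator, so the Package, Pin and Pin-Priority lines have to sit directly under each other in the file. A minimal sketch using a here-document, which makes that easier to get right than a multi-line echo. The function name `write_drbd_pin` is only for illustration:

```shell
# Write the pin stanza for drbd8-utils to the file given as $1.
# write_drbd_pin is a hypothetical helper, not part of any package.
write_drbd_pin() {
    cat > "$1" <<'EOF'
Package: drbd8-utils
Pin: release n=squeeze-backports
Pin-Priority: 900
EOF
}

# Example (as root):
#   write_drbd_pin /etc/apt/preferences.d/drbd
#   apt-cache policy drbd8-utils    # shows which version APT would install
```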

As the DRBD servers work closely together, it’s important to keep their clocks synchronised. Install an NTP daemon for this job.

apt-get install ntp ntpdate

You also need a kernel module but that one is in the stock Debian kernel. If you’re compiling kernels yourself, make sure to include this module. When you’re ready, load the module:

modprobe drbd

Verify that all went well by checking the active modules:

lsmod | grep drbd

The expected output is something like:

drbd 191530 4

lru_cache 12880 1 drbd

cn 12933 1 drbd
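If you want to script this check instead of reading the lsmod output by eye, something like the following could work. It is a small sketch that reads /proc/modules (the file lsmod itself reads); the function name `drbd_module_loaded` is made up for this example:

```shell
# Succeed if a module named exactly "drbd" is listed.
# $1 is a file in /proc/modules format and defaults to /proc/modules.
drbd_module_loaded() {
    awk '$1 == "drbd" { found = 1 } END { exit !found }' "${1:-/proc/modules}"
}

# Example:
#   drbd_module_loaded || modprobe drbd
```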

Most online tutorials instruct you to edit ‘/etc/drbd.conf’. I’d suggest not touching that file and creating one in /etc/drbd.d/ instead. That way, your changes are never overwritten and it’s clear what local changes you made.

vim /etc/drbd.d/redundantstorage.res

Enter this configuration:

resource redundantstorage {

protocol C;

startup { wfc-timeout 0; degr-wfc-timeout 120; }

disk { on-io-error detach; }

on storage-server0.example.org {

device /dev/drbd0;

disk /dev/sda3;

meta-disk internal;

address 10.10.0.86:7788;

}

on storage-server1.example.org {

device /dev/drbd0;

disk /dev/sda3;

meta-disk internal;

address 10.10.0.88:7788;

}

}

Make sure your hostnames match the hostnames in this config file as it will not work otherwise. To see the current hostname, run:

uname -n

Modify /etc/hosts, /etc/resolv.conf and/or /etc/hostname to your needs and do not continue until the actual hostname matches the one you set in the configuration above.
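The hostname check is easy to automate. Below is a rough sketch that looks for an ‘on HOSTNAME’ section in the resource file; `host_in_resource` is a name I picked for illustration, and it assumes the ‘on’ keyword and the hostname are on the same line, as in the configuration above:

```shell
# host_in_resource HOSTNAME RESFILE
# Succeed if RESFILE contains a section that starts with "on HOSTNAME".
host_in_resource() {
    awk -v host="$1" '$1 == "on" && $2 == host { found = 1 } END { exit !found }' "$2"
}

# Example:
#   host_in_resource "$(uname -n)" /etc/drbd.d/redundantstorage.res \
#       || echo "hostname $(uname -n) is not in the DRBD config"
```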

Also, make sure you did all the steps so far on both servers.

It’s now time to initialise the DRBD device. Create the metadata and bring the resource up; ‘drbdadm up’ is shorthand for the attach, syncer and connect steps, so there is no need to run those separately:

drbdadm create-md redundantstorage

drbdadm up redundantstorage

Run this on the primary server only:

drbdadm -- --overwrite-data-of-peer primary redundantstorage

Monitor the progress:

cat /proc/drbd
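When scripting the setup, it helps to wait until the initial sync has finished before going on. The sketch below pulls the connection state (cs:) and disk states (ds:) out of /proc/drbd. It assumes the DRBD 8.3 status line layout, for example ‘0: cs:SyncSource ro:Primary/Secondary ds:UpToDate/Inconsistent C r-----’, and only looks at the first device; both function names are hypothetical:

```shell
# drbd_state [FILE]
# Print "<connection state> <disk states>" for the first device.
# FILE defaults to /proc/drbd.
drbd_state() {
    sed -n 's/.*cs:\([^ ]*\).*ds:\([^ ]*\).*/\1 \2/p' "${1:-/proc/drbd}" | head -n 1
}

# drbd_in_sync [FILE]
# Succeed once both nodes are connected and both disks are up to date.
drbd_in_sync() {
    [ "$(drbd_state "$1")" = "Connected UpToDate/UpToDate" ]
}

# Example: poll every 10 seconds until the initial sync is done
#   until drbd_in_sync; do drbd_state; sleep 10; done
```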

Start the DRBD service on both servers:

service drbd start

You now have a raw block device on /dev/drbd0 that is synced from the primary to the secondary server.

Using the DRBD device

Let’s create a filesystem on our new DRBD device. I prefer using LVM since that makes it easy to manage the partitions later on. But you may also simply use the /dev/drbd0 device as any block device on its own.

Initialize LVM2:

pvcreate /dev/drbd0

pvdisplay

vgcreate redundantstorage /dev/drbd0

We now have an LVM2 volume group called ‘redundantstorage’ on device /dev/drbd0.

Create the desired LVM partitions on it like this:

lvcreate -L 1T -n web_files redundantstorage

lvcreate -L 250G -n other_files redundantstorage

The partitions you create appear under a directory named after the volume group. You can now use ‘/dev/redundantstorage/web_files’ and ‘/dev/redundantstorage/other_files’ like you’d otherwise use ‘/dev/sda3’ etc.

Before we can actually use them, we need to create a file system on top:

mkfs.ext4 /dev/redundantstorage/web_files

mkfs.ext4 /dev/redundantstorage/other_files

Finally, mount the file systems:

mkdir -p /redundantstorage/web_files

mkdir -p /redundantstorage/other_files

mount /dev/redundantstorage/web_files /redundantstorage/web_files

mount /dev/redundantstorage/other_files /redundantstorage/other_files

Using the DRBD file systems

Two more steps are needed before we can test our new redundant storage cluster: Heartbeat to manage the cluster and NFS to make use of it. Let’s start with NFS, so Heartbeat will be able to manage that later on as well.

To install NFS server, simply run:

apt-get install nfs-kernel-server

Then set up which directories you want to export using your NFS server.

vim /etc/exports

And enter this configuration:

/redundantstorage/web_files 10.10.0.0/24(rw,async,no_root_squash,no_subtree_check,fsid=1)

/redundantstorage/other_files 10.10.0.0/24(rw,async,no_root_squash,no_subtree_check,fsid=2)

Important:

Pay attention to the ‘fsid’ parameter. It is really important because it tells the clients that the file systems on the primary and the secondary are the same. If you omit this parameter, the clients will ‘hang’ and wait for the old primary to come back online after a fail-over happens. Since this is not what we want, we need to tell the clients that the other server is simply the same. Fail-over will then happen almost without notice. Most tutorials I read do not tell you about this crucial step.
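Because a missing or duplicated fsid is easy to overlook, a quick check of the exports file on both servers may be worthwhile. This is a minimal sketch, not an official tool: `check_fsids` is a hypothetical helper that only understands simple one-line exports like the ones above.

```shell
# check_fsids [FILE]
# Succeed only if every export line carries an fsid= option and no
# fsid value is used twice. FILE defaults to /etc/exports.
check_fsids() {
    awk '
        /^[ \t]*(#|$)/ { next }    # skip comments and blank lines
        {
            if (match($0, /fsid=[^,)]+/) == 0) {
                print "no fsid: " $1
                bad = 1
                next
            }
            id = substr($0, RSTART + 5, RLENGTH - 5)
            if (id in seen) {
                print "duplicate fsid " id ": " $1
                bad = 1
            }
            seen[id] = 1
        }
        END { exit bad + 0 }
    ' "${1:-/etc/exports}"
}

# Example:
#   check_fsids /etc/exports && echo "exports look fine"
```

Run it on both servers; the fsid values also need to be the same on both for a given export, which this sketch does not compare.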

Make sure you have all this set up on both servers. Since we want Heartbeat to manage our NFS server, NFS must not be started at boot. To arrange that, run:

update-rc.d -f nfs-common remove

update-rc.d -f nfs-kernel-server remove

Basic Heartbeat configuration

Installing the Heartbeat package is simple:

apt-get install heartbeat

If you’re on CentOS, have a look at the EPEL repository. I’ve successfully set up Heartbeat with those packages as well.

To configure Heartbeat:

vim /etc/ha.d/ha.cf

Enter this configuration:

autojoin none

auto_failback off

keepalive 2

warntime 5

deadtime 10

initdead 20

bcast eth0

node storage-server0.example.org

node storage-server1.example.org

logfile /var/log/heartbeat-log

debugfile /var/log/heartbeat-debug

I set ‘auto_failback’ to off, since I do not want another fail-over when the old primary comes back. If your primary server has better hardware than the secondary one, you may want to set this to ‘on’ instead.

The parameter ‘deadtime’ tells Heartbeat to declare the other node dead after this many seconds. Heartbeat will send a heartbeat every ‘keepalive’ number of seconds.
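To get a feel for these two values: with ‘keepalive 2’ and ‘deadtime 10’, a node is declared dead after 5 heartbeat intervals without a reply. The small sketch below computes that number from a ha.cf file; `missed_beats` is just an illustrative name:

```shell
# missed_beats [FILE]
# Print how many keepalive intervals fit into deadtime.
# FILE defaults to /etc/ha.d/ha.cf. Fails if keepalive is missing or zero.
missed_beats() {
    awk '
        $1 == "keepalive" { k = $2 }
        $1 == "deadtime"  { d = $2 }
        END { if (k > 0) print int(d / k); else exit 1 }
    ' "${1:-/etc/ha.d/ha.cf}"
}

# Example:
#   echo "peer is declared dead after $(missed_beats) missed heartbeats"
```

A very low number makes the cluster trigger-happy on a busy network, so it seems wise to keep a few intervals of slack.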

Protect your heartbeat setup with a password:

echo "auth 3

3 md5 your_secret_password

" > /etc/ha.d/authkeys

chmod 600 /etc/ha.d/authkeys
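Instead of typing a password by hand, you could generate a random one. The following is a sketch under my own assumptions: `write_authkeys` is a hypothetical helper, it uses key number 1 rather than 3, and it sticks with md5 as in the example above.

```shell
# write_authkeys FILE
# Create FILE with a random 32-character hex secret, readable by its owner only.
write_authkeys() {
    # 16 random bytes, printed as hex without spaces or newlines
    secret=$(head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n')
    # umask covers a newly created file, chmod covers an existing one
    ( umask 077 && printf 'auth 1\n1 md5 %s\n' "$secret" > "$1" ) && chmod 600 "$1"
}

# Example (as root, on one node only):
#   write_authkeys /etc/ha.d/authkeys
#   scp -p /etc/ha.d/authkeys storage-server1.example.org:/etc/ha.d/authkeys
```

Generate the file on one node and copy it to the other, since both nodes need the same key.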

You need to select an ip-address that will be your ‘service’-address. Both servers have their own 10.10.0.x ip-address, so choose another one in the same range. I use 10.10.0.10 in this example. Why do we need this? Simply because a client cannot know which server is currently the primary. That’s why we will instruct Heartbeat to manage an extra ip-address and bring it up on the current primary server. When clients connect to this ip-address it will always work.

In the ‘haresources’ file you describe all services Heartbeat manages. In our case, these services are:

– service ip-address

– DRBD disk

– LVM2 service

– Two filesystems

– NFS daemons

Enter them in the order they need to start. When shutting down, Heartbeat will stop them in reverse order.

vim /etc/ha.d/haresources

Enter this configuration:

storage-server0.example.org \

IPaddr::10.10.0.10/24/eth0 \

drbddisk::redundantstorage \

lvm2 \

Filesystem::/dev/redundantstorage/web_files::/redundantstorage/web_files::ext4::nosuid,usrquota,noatime \

Filesystem::/dev/redundantstorage/other_files::/redundantstorage/other_files::ext4::nosuid,usrquota,noatime \

nfs-common \

nfs-kernel-server

Use the same Heartbeat configuration on both servers. In the ‘haresources’ file you specify one of the nodes to be the primary. In our case it’s ‘storage-server0’. When this server is or becomes unavailable, Heartbeat will start the services it knows on the other node, ‘storage-server1’ in this case (as specified in the ha.cf config file).
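A simple way to see which node is currently active is to check where the service address lives. The sketch below scans the output of ‘ip -o -4 addr show’ for the address; `has_service_ip` is a name I made up for this example:

```shell
# has_service_ip IP
# Reads `ip -o -4 addr show` output on stdin and succeeds if IP is configured.
# -F makes grep treat the dots in the address literally.
has_service_ip() {
    grep -qF "inet $1/"
}

# Example (run on each node):
#   if ip -o -4 addr show | has_service_ip 10.10.0.10; then
#       echo "$(uname -n) holds the service address"
#   fi
```

After stopping Heartbeat on the active node, the same check should succeed on the other one.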

Wrapping up

DRBD combined with Heartbeat and NFS creates a powerful, redundant storage solution all based on Open Source software. When using the right hardware you will be able to achieve great performance with this setup as well. Think about RAID controllers with SSD-cache and don’t forget the Battery Backup Unit so you can enable the Write Back Cache.

Enjoy building your redundant storage!