I was the Release Manager for Apache CloudStack versions 4.6, 4.7 and 4.8 and during this time many people told me they thought it’s hard to build and package Apache CloudStack. The truth is, it’s not that hard once you know the steps to take. 😉


Then there’s the release pace. You may want to move at a different pace than the project does. There have been many discussions, for example, about a fast release cycle on the one hand and LTS releases on the other. In this blog post I’ll show you how easy it is to create your own CloudStack release to meet the demands of your organisation.

Maven

Compiling Apache CloudStack is done using Maven. You need to install this tool in order to work with releases, so let’s do it. I’ll assume you want to compile on a CentOS 7 box, but it should work on pretty much any OS.

yum install maven java-1.8.0-openjdk mkisofs ws-commons-util genisoimage gcc

We also install Java and some tools needed to compile Apache CloudStack.

Versioning

When you’re building your own release of Apache CloudStack, you have two options:

- Rebuild an existing version

- Create a new version

Keep in mind that when you create a new version, you also need to create so-called upgrade paths: how the database can be upgraded to your version. When you choose option 1 and rebuild an existing version, this is not necessary. That sounds easy, but it is also confusing, as there’s no way to tell the difference between your build and the stock release later on.

Apache CloudStack works with versions like 4.8.0 and 4.7.1, etc. The upgrade mechanism will only consider the first 3 parts. This means, we are free to create 4.8.0.16 (our 16th custom version of 4.8.0) as long as we do not touch the database. That sounds like a nice way to make custom daily releases, and at the same time can be used for those wanting to build LTS (Long Term Support) versions.
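To make the numbering rule concrete, here is a small shell sketch that trims a version string to its first three parts. It only illustrates the rule described above and is not how CloudStack implements it; the function name `upgrade_version` is made up for this example.

```shell
#!/bin/sh
# Hypothetical helper (not part of CloudStack): keep only the first three
# dot-separated parts of a version string.
upgrade_version() {
    printf '%s\n' "$1" | cut -d. -f1-3
}

upgrade_version 4.8.0.16   # prints 4.8.0
upgrade_version 4.7.1      # prints 4.7.1
```

Both 4.8.0 and 4.8.0.16 reduce to the same three-part version, which is why a fourth number can be added without touching the database.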

Setting the version

The question then is, how can we modify the version of Apache CloudStack? Well, there’s actually a tool supplied in the source that does this for you. It’s called setnextversion.sh. Here’s how it works:

usage: ./tools/build/setnextversion.sh -v version [-b branch] [-s source dir] [-h]
  -v sets the version
  -b sets the branch (defaults to 'master')
  -s sets the source directory
  -h

To build our custom 4.8.0.16 version off the 4.8 branch we run:

./tools/build/setnextversion.sh -v 4.8.0.16 -b 4.8 -s /data/git/cs1/cloudstack/

Output shows a lot of things, most interesting:

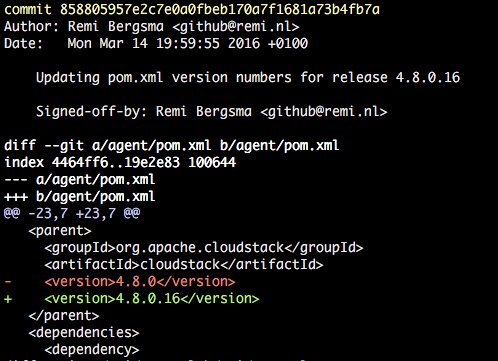

found 4.8.0
setting version numbers
[master 27fa04a] Updating pom.xml version numbers for release 4.8.0.16
126 files changed, 130 insertions(+), 130 deletions(-)
committed as 858805957e2c7e0a0fbeb170a7f1681a73b4fb7a

The result is a new commit that changed the versions in the POMs. You can see it here:

git log

You’ve successfully set the version!
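If you build custom versions regularly, the next number can be derived from the previous one. Here is a minimal sketch, assuming the four-part scheme used in this post; `next_custom_version` is a hypothetical helper and not part of the CloudStack tooling.

```shell
#!/bin/sh
# Hypothetical helper: bump the fourth part of a version, or start at .1
# when the version only has three parts.
next_custom_version() {
    base=$(printf '%s\n' "$1" | cut -d. -f1-3)
    build=$(printf '%s\n' "$1" | cut -d. -f4)
    # An empty fourth part counts as 0, so 4.8.0 becomes 4.8.0.1.
    printf '%s.%s\n' "$base" "$(( ${build:-0} + 1 ))"
}

next_custom_version 4.8.0.16   # prints 4.8.0.17
next_custom_version 4.8.0      # prints 4.8.0.1
```

The result can then be passed to the `-v` option of setnextversion.sh. Note that the sketch does not handle build numbers with leading zeros.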

Compiling the custom version

Let’s compile it. This works like with any release build:

mvn clean install -P systemvm

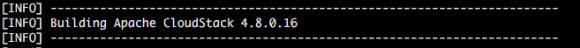

This will take a few minutes. You should see your new version flying by lots of times.

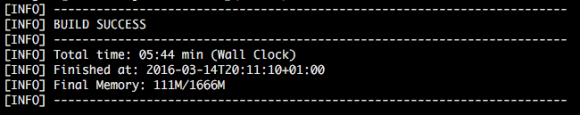

After a few minutes the build completes.

By the way, if you want to skip the unit tests to speed up the process, add -DskipTests to the mvn command.
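If you script your builds, the optional flag can be toggled with a variable. The sketch below only assembles and prints the command from this post; `SKIP_TESTS` and `build_command` are names invented for this example, not something Maven or CloudStack reads.

```shell
#!/bin/sh
# Assemble the Maven command used in this post. SKIP_TESTS is a variable
# made up for this sketch; set it to "yes" to add -DskipTests.
build_command() {
    cmd="mvn clean install -P systemvm"
    if [ "${SKIP_TESTS:-no}" = "yes" ]; then
        cmd="$cmd -DskipTests"
    fi
    printf '%s\n' "$cmd"
}

# Print the command for review before running it.
build_command
```

Printing the command first makes it easy to check what will run; once you are happy with it, execute the printed line from the root of the source tree.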

RPM packages

The source also contains scripts to build packages. To build packages for CentOS 7 for example, do this:

cd ./packaging
./package.sh -d centos7

You should see this:

Preparing to package Apache CloudStack 4.8.0.16

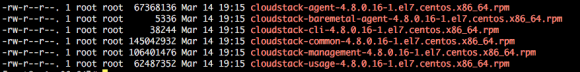

When the script finishes, you can find the RPM packages here:

ls -la ../dist/rpmbuild/RPMS/x86_64/
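To double-check that the packages carry your custom version, you could filter the file names. This is a rough sketch: it assumes the version appears in the RPM file name between two dashes, which you should verify against your own output, and `rpms_for_version` is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical check: print the RPMs in a directory whose file name contains
# the given version. The "-<version>-" name pattern is an assumption.
rpms_for_version() {
    dir=$1
    version=$2
    for f in "$dir"/*-"$version"-*.rpm; do
        # Skip the unexpanded pattern when nothing matches.
        [ -e "$f" ] && basename "$f"
    done
    return 0
}

# Run from the packaging directory, like the ls command above.
rpms_for_version ../dist/rpmbuild/RPMS/x86_64 4.8.0.16
```

An empty result means no package with that version was found in the directory.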

Installing the new version

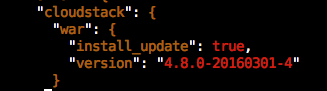

You can either use the WAR that results from the compile, or install the generated RPM packages. Installing and upgrading are out of scope for this blog post, so I assume you know how to install a stock version of CloudStack. I first installed a stock 4.8.0 version, then (as shown above) built 4.8.0.16, and will now show the upgrade.

As soon as you start the management server with the new version you will see this:

2016-03-14 12:34:09,986 DEBUG [c.c.u.d.VersionDaoImpl] (main:null) (logid:) Checking to see if the database is at a version before it was the version table is created
2016-03-14 12:34:09,995 INFO [c.c.u.DatabaseUpgradeChecker] (main:null) (logid:) DB version = 4.8.0 Code Version = 4.8.0.16
2016-03-14 12:34:09,995 INFO [c.c.u.DatabaseUpgradeChecker] (main:null) (logid:) DB version and code version matches so no upgrade needed.

This is actually very nice. We made a custom version and CloudStack still treats it as 4.8.0, so no upgrade of the database is needed. This obviously means that you cannot do this when your patch requires a database change.
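If you want to check this from a script, the log line can be parsed. The sketch below mirrors the rule described in this post (compare the first three parts); it is not CloudStack's own checker, and `check_upgrade_line` is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: read management server log lines on stdin and report
# whether the DB version and code version share the same first three parts.
check_upgrade_line() {
    sed -n 's/.*DB version = \([0-9.]*\) Code Version = \([0-9.]*\).*/\1 \2/p' |
    while read -r db code; do
        db3=$(printf '%s\n' "$db" | cut -d. -f1-3)
        code3=$(printf '%s\n' "$code" | cut -d. -f1-3)
        if [ "$db3" = "$code3" ]; then
            echo "no upgrade needed: db=$db code=$code"
        else
            echo "upgrade needed: db=$db code=$code"
        fi
    done
}

# Sample line taken from the log output above.
echo '2016-03-14 12:34:09,995 INFO [c.c.u.DatabaseUpgradeChecker] (main:null) (logid:) DB version = 4.8.0 Code Version = 4.8.0.16' | check_upgrade_line
```

To use it on a real system, pipe your management server log into the function; the location of that log depends on your installation.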

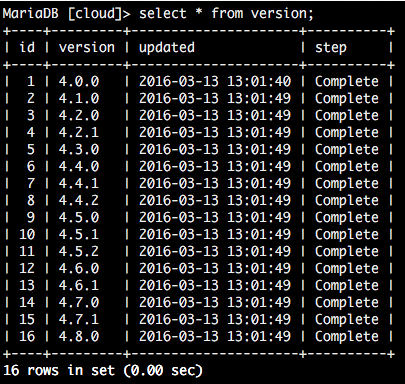

When we look at the database, we can confirm we still run a 4.8.0-compatible release:

From this table, one cannot tell we upgraded to our custom version. But when you look closer, the new version is active.

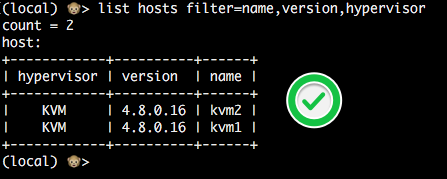

This is the version an agent reports:

Also, the UI will show the custom version in the About box. This way users can easily tell what version they are running.

Conclusion

Creating your own custom version of Apache CloudStack may sound complicated but we’ve seen it’s pretty easy to do so. Creating a custom release will provide you with a lot of flexibility, especially if you combine it with war dropping. By numbering your version like 4.8.0.x you don’t have to worry about upgrade paths.

Happy releasing!

Upgrading

Upgrading

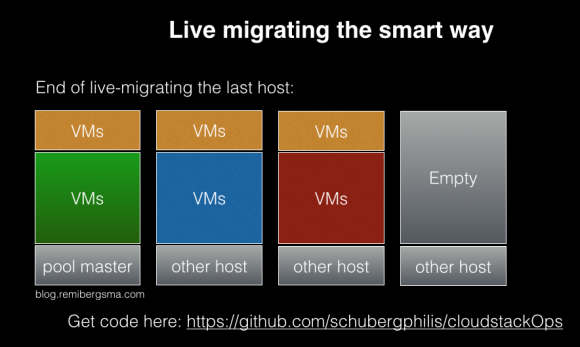

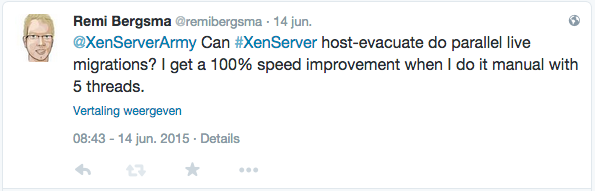

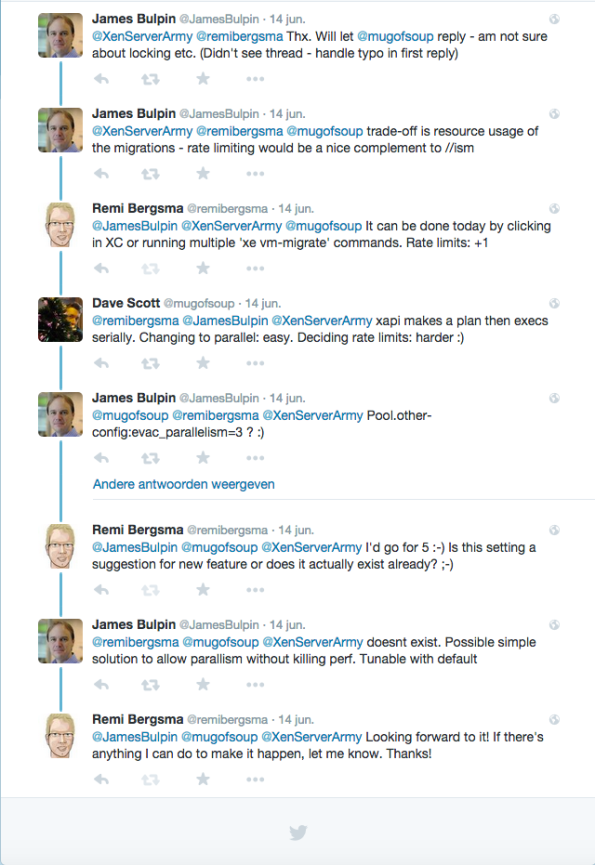

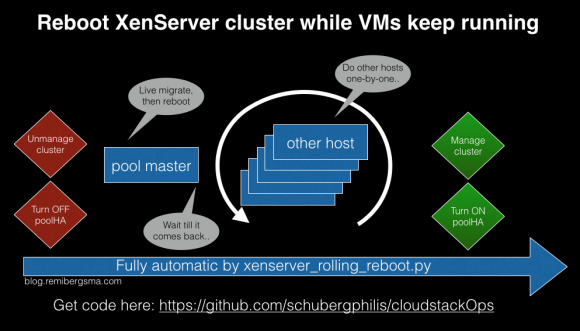

CloudStackOps

CloudStackOps