I’ve been using CloudStack for some months now and our cloud is close to going live. It’s an awesome piece of software that is just rock solid :-). One thing I couldn’t really find is how to create highly available management servers with automatic failover. I’ve come up with a solution that I’ll share in this blog post.

From the CloudStack manual:

The CloudStack Management Server should be deployed in a multi-node configuration such that it is not susceptible to individual server failures. (…) MySQL may be configured to use replication to provide for a manual failover in the event of database loss.

Of course, when building a cloud one cannot just have one management server, as that would create a big single point of failure. Even though there is no impact on VMs that are already running, you and your customers won’t be able to, for example, stop and start VMs. The manual suggests looking into “MySQL replication” and, when problems occur, doing a “manual failover” to another server.

How does that work? The management server itself is stateless, which means you can have two management servers and a command issued to either of them gives the same result. You can distribute the load; it just doesn’t matter which management server you talk to. So there’s no master or slave: they’re all the same. The challenge is where CloudStack stores its data: in a MySQL server. We should have one MySQL master server that handles the requests from the management servers. MySQL supports replication, which means you can add MySQL slave servers that stay in sync with the master using the binary logs. You cannot write to them directly; that’s what the master is for. When the master dies, you can promote a slave to be the new master. But this is a manual step.

Personally, I’d like to automate this. Furthermore, my experience with MySQL master/slave in the past is that it isn’t rock solid. Sometimes slaves would get out of sync due to some error. At the very least you need monitoring to warn you when this happens. It is almost always possible to fix this, but again this is manual work and I was looking for an automatic solution. So I came up with an alternative.
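To give an idea of what such monitoring could look like, here is a minimal sketch in Python. It only inspects one row of `SHOW SLAVE STATUS` output that you have already fetched as a dictionary (for example with a dictionary cursor). The function name and the 60-second lag threshold are my own assumptions for illustration; the column names are the ones MySQL reports.

```python
def replication_problems(status, max_lag_seconds=60):
    """Return a list of problems found in one SHOW SLAVE STATUS row.

    `status` maps column names to values. An empty list means the slave
    looks healthy. The threshold is an illustrative default, not a
    recommendation.
    """
    if not status:
        # SHOW SLAVE STATUS returns no rows on a server that is not a slave.
        return ["no replication status: server is not configured as a slave"]

    problems = []

    # Both replication threads must be running.
    for thread in ("Slave_IO_Running", "Slave_SQL_Running"):
        if status.get(thread) != "Yes":
            problems.append(f"{thread} is {status.get(thread)!r}")

    # The lag is NULL (None) when replication is not running.
    lag = status.get("Seconds_Behind_Master")
    if lag is None:
        problems.append("Seconds_Behind_Master is NULL")
    elif int(lag) > max_lag_seconds:
        problems.append(f"slave is {int(lag)}s behind the master")

    # An empty Last_Error means the SQL thread did not stop on an error.
    if status.get("Last_Error"):
        problems.append(f"last error: {status['Last_Error']}")

    return problems
```

You would call this from whatever monitoring system you already use and raise an alert when the returned list is not empty. It warns you; it does not repair anything.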

Since 2005 I’ve been building Linux clusters at work for our webhosting and e-mail business, using Open Source tools. One of the things I’ve been using for years is DRBD. You can think of DRBD as a network-based RAID-1 mirror. Using a dedicated high-speed network (Gigabit or better), DRBD keeps two disks in sync. Using another Open Source tool, Heartbeat, one can automatically fail over from one server to another and keep the service online. Heartbeat and DRBD can fail over in under a second, and even in case of a complete (power) failure of the hardware, the automatic failover takes just 10 seconds. Now that’s cool!

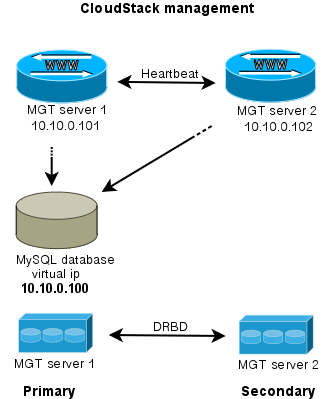

How can this help solve the management server issue? Imagine two management servers that use DRBD to keep a given disk in sync. This disk is mounted on /var/lib/mysql on the management server that is primary. It is not mounted on the secondary management server. Heartbeat makes sure MySQL only runs on the primary server. To make it all easy to manage, Heartbeat also takes care of an extra IP address that is always assigned to the primary server. We call this the “virtual IP address”. It looks like this:

What do we have then? Two management servers that both run the CloudStack management software and can both be used to reach the web interface, call the API, etc. Both management servers use the MySQL server, which runs on the primary server. Tell CloudStack to use the “virtual IP address” as the MySQL host address. DRBD will make sure the stand-in server has an up-to-date version of the MySQL disk.
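Pointing CloudStack at the virtual IP address comes down to changing the database host in the management server’s `db.properties` file on both servers. Below is a small sketch in Python that rewrites the host entries in the text of such a file. I’m assuming the keys are called `db.cloud.host` and `db.usage.host`; check your own file, since the key names and the file location can differ per CloudStack version. The address `10.0.0.10` in the usage example is purely hypothetical.

```python
# Assumed key names; verify them against your own db.properties.
DB_HOST_KEYS = ("db.cloud.host", "db.usage.host")


def point_db_at_virtual_ip(properties_text, virtual_ip):
    """Return the properties text with the database host keys set to virtual_ip.

    Comments and all other settings are copied through unchanged.
    """
    result = []
    for line in properties_text.splitlines():
        key = line.split("=", 1)[0].strip()
        if "=" in line and key in DB_HOST_KEYS:
            # Replace only the value; the key stays the same.
            result.append(f"{key}={virtual_ip}")
        else:
            result.append(line)
    return "\n".join(result) + "\n"


if __name__ == "__main__":
    example = "db.cloud.host=localhost\ndb.cloud.port=3306\n"
    # 10.0.0.10 is a made-up virtual IP address for illustration.
    print(point_db_at_virtual_ip(example, "10.0.0.10"), end="")
```

Run this against a copy of the file first and compare the result by hand before replacing the real one. The management server will most likely need a restart to pick up the new host.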

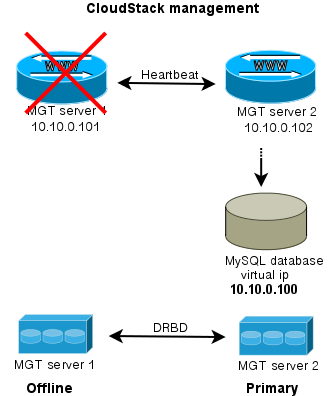

If the secondary server dies, nothing happens apart from losing redundancy. What if the primary server fails?

When either server goes offline, the MySQL disk is mounted and the MySQL service runs on the server that is still alive. Of course, the CloudStack management server on that machine is still available as well. This way, you have an automatic failover for the CloudStack management server.
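A simple way to see the failover from the outside is to keep probing the MySQL port on the virtual IP address while you take the primary server down. Here is a minimal sketch in Python that only checks whether a TCP connection can be made. The function name is my own, and 3306 is assumed because it is MySQL’s default port. Note that an open port does not prove that MySQL answers queries correctly.

```python
import socket


def mysql_port_open(host, port=3306, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection raises OSError on refusal, timeout or routing errors.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Call it in a loop with the virtual IP address as `host` and log the moments it flips between `True` and `False`; the gap between them is roughly the downtime your management servers would notice.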

To extend this setup, one could easily set up a load balancer that distributes the traffic between the management servers. Both keepalived and haproxy can do that for you.

I hope this brings some inspiration to others working with CloudStack. If you have suggestions, questions or improvements, let me know!